Data API : How to calculate top metrics

This document provides calculation methods for key performance metrics using the Moveworks Data API. All calculations are based on the Beta2 version of the API and use the actual response structures from the Moveworks platform.

The Moveworks Data API provides five main endpoints:

https://api.moveworks.ai/export/v1/records/conversations
https://api.moveworks.ai/export/v1/records/interactions
https://api.moveworks.ai/export/v1/records/plugin-calls
https://api.moveworks.ai/export/v1/records/plugin-resources
https://api.moveworks.ai/export/v1/records/users

The API returns data in OData JSON format.
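As a starting point for loading these endpoints into a data lake, here is a minimal Python sketch that pulls every page of one endpoint. It assumes the standard OData paging convention, where rows arrive in a `value` array and an `@odata.nextLink` field points to the next page. The bearer-token header in the hypothetical `http_get_json` helper is also an assumption; adapt it to however your tenant authenticates. The page fetcher is passed in as a function so that the paging logic can be tested without network access.

```python
import json
import urllib.request
from typing import Any, Callable, Dict, List

BASE_URL = "https://api.moveworks.ai/export/v1/records"


def http_get_json(url: str, token: str) -> Dict[str, Any]:
    """Fetch one page of results.

    Assumption: bearer-token authentication; adjust to your own setup.
    """
    request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def fetch_all_records(
    url: str, get_json: Callable[[str], Dict[str, Any]]
) -> List[Dict[str, Any]]:
    """Collect the rows of every page by following "@odata.nextLink"
    until the response no longer advertises a next page."""
    records: List[Dict[str, Any]] = []
    next_url = url
    while next_url:
        page = get_json(next_url)
        records.extend(page.get("value", []))  # OData puts the rows in "value"
        next_url = page.get("@odata.nextLink")
    return records
```

For example: `conversations = fetch_all_records(f"{BASE_URL}/conversations", lambda url: http_get_json(url, token="<your token>"))`.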

Please note: The following sections refer to data tables such as Conversations, Interactions, and Plugin-calls. They assume that you have already fetched the data from the API and stored it in your data lake.

Active users are defined as users who perform at least one interaction within a given timeframe.
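A minimal Python sketch of both counting methods described below. It assumes the exported rows are loaded as dicts that mirror the API records, with timestamps stored as ISO-8601 strings, and (for Method 1) that only rows with actor = "user" count as an interaction performed by the user. The function names are illustrative, not part of any Moveworks SDK.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Iterable, Optional


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def in_window(value: Optional[str], start: datetime, end: datetime) -> bool:
    """True when the timestamp falls inside the half-open window [start, end)."""
    return value is not None and start <= parse_ts(value) < end


def active_users_from_interactions(
    interactions: Iterable[Dict[str, Any]], start: datetime, end: datetime
) -> int:
    """Method 1: distinct user_id values among user interactions in the window."""
    return len({
        row["user_id"]
        for row in interactions
        if row.get("actor") == "user" and in_window(row.get("created_time"), start, end)
    })


def active_users_from_users(
    users: Iterable[Dict[str, Any]], start: datetime, end: datetime
) -> int:
    """Method 2: users whose latest_interaction_time falls in the window."""
    return sum(1 for user in users if in_window(user.get("latest_interaction_time"), start, end))
```

Method 2 only sees each user's most recent activity, so for a past timeframe it misses users who have interacted again since then; Method 1 does not have that limitation.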

Method 1 (Interactions-based):

Count the distinct values of the user_id field in the interactions table within the timeframe.

Method 2 (Users table-based):

Count the users in the users table whose latest_interaction_time falls within the timeframe.

Adoption shows how use of the AI Assistant trends over time.

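Use the users with access_to_bot = true from the users table as the baseline, and track active users against that baseline over time.

Retention tracks the percentage of users who return to the AI Assistant within a defined time window.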
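Following the rule in the steps below (a user_id that appears more than once in the timeframe counts as retained), a minimal Python sketch could look like this. It assumes interaction rows are dicts mirroring the API records, that only actor = "user" rows count, and that the percentage is taken over all users active in the window; all three are assumptions rather than documented behavior.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Any, Dict, Iterable


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def retention_rate(
    interactions: Iterable[Dict[str, Any]], start: datetime, end: datetime
) -> float:
    """Percentage of users active in [start, end) whose user_id appears more than once."""
    per_user = Counter(
        row["user_id"]
        for row in interactions
        if row.get("actor") == "user" and start <= parse_ts(row["created_time"]) < end
    )
    if not per_user:
        return 0.0
    retained = sum(1 for count in per_user.values() if count > 1)
    return 100.0 * retained / len(per_user)
```

Applied literally, two messages in the same session also count as a return; counting distinct days or distinct conversation_id values per user is a stricter variant.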

1. Get the user_id values from the interactions table.
2. If a user_id appears more than once in the timeframe, mark that user as retained.

New users are those who interact with the AI Assistant for the first time within the selected timeframe.
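Using the users table, a minimal Python sketch of the new-user count, assuming user rows are dicts with first_interaction_time stored as an ISO-8601 string (the function name is illustrative):

```python
from datetime import datetime, timezone
from typing import Any, Dict, Iterable


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def new_user_count(users: Iterable[Dict[str, Any]], start: datetime, end: datetime) -> int:
    """Users whose first_interaction_time falls in the half-open window [start, end)."""
    return sum(
        1
        for user in users
        if user.get("first_interaction_time")
        and start <= parse_ts(user["first_interaction_time"]) < end
    )
```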

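Count the users in the users table whose first_interaction_time falls within the timeframe. Users where first_interaction_time = latest_interaction_time have not interacted again since their first interaction.

To count conversations, take the distinct id values from the conversations API in the timeframe. You can break these down by the route attribute and by the primary_domain attribute.

Conversation topics are aggregated from interaction-level topic detection.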
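Per the steps below (free-text interactions, detail.entity grouped by conversation ID), a minimal Python sketch of this aggregation. It assumes interaction rows are dicts with a nested `detail` dict and that detail.entity holds a single topic string; both are assumptions about the exported shape.

```python
from collections import Counter, defaultdict
from typing import Any, Dict, Iterable, List


def topics_by_conversation(interactions: Iterable[Dict[str, Any]]) -> Dict[str, List[str]]:
    """Collect the distinct detail.entity values of free-text interactions per conversation."""
    topics: Dict[str, List[str]] = defaultdict(list)
    for row in interactions:
        if row.get("type") != "INTERACTION_TYPE_FREE_TEXT":
            continue
        entity = (row.get("detail") or {}).get("entity")
        if entity and entity not in topics[row["conversation_id"]]:
            topics[row["conversation_id"]].append(entity)
    return dict(topics)


def topic_counts(by_conversation: Dict[str, List[str]]) -> Counter:
    """Number of conversations in which each topic was detected."""
    return Counter(topic for topics in by_conversation.values() for topic in topics)
```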

1. Filter for type = INTERACTION_TYPE_FREE_TEXT in the interactions table.
2. Aggregate the detail.entity values by conversation ID.

End users can provide feedback via thumbs clicks or feedback forms.

Thumbs clicks: Filter for type = INTERACTION_TYPE_INTERNAL_LINK in the interactions table, then read detail.resource_id:

- Helpful → Thumbs Up
- Unhelpful → Thumbs Down

Feedback forms: Filter for type = INTERACTION_TYPE_UIFORM_SUBMISSION. The label attribute indicates a feedback form, the form name is in detail.detail, and the submitted content is in detail.content.

End users can escalate their issues to a live agent if they find the summarized response provided by the AI Assistant unhelpful, or they can request to connect with an agent directly.

To track this via the plugin-calls table:
1. Filter for name = “Start Live Agent Chat” plugin.
2. Filter for served = true and used = true.
3. Use the interaction_id attribute to fetch the details from the interactions table.

The interactions table also captures whether an end user has requested to connect with a live agent. There are two paths: going through the handoff, or requesting to connect directly.

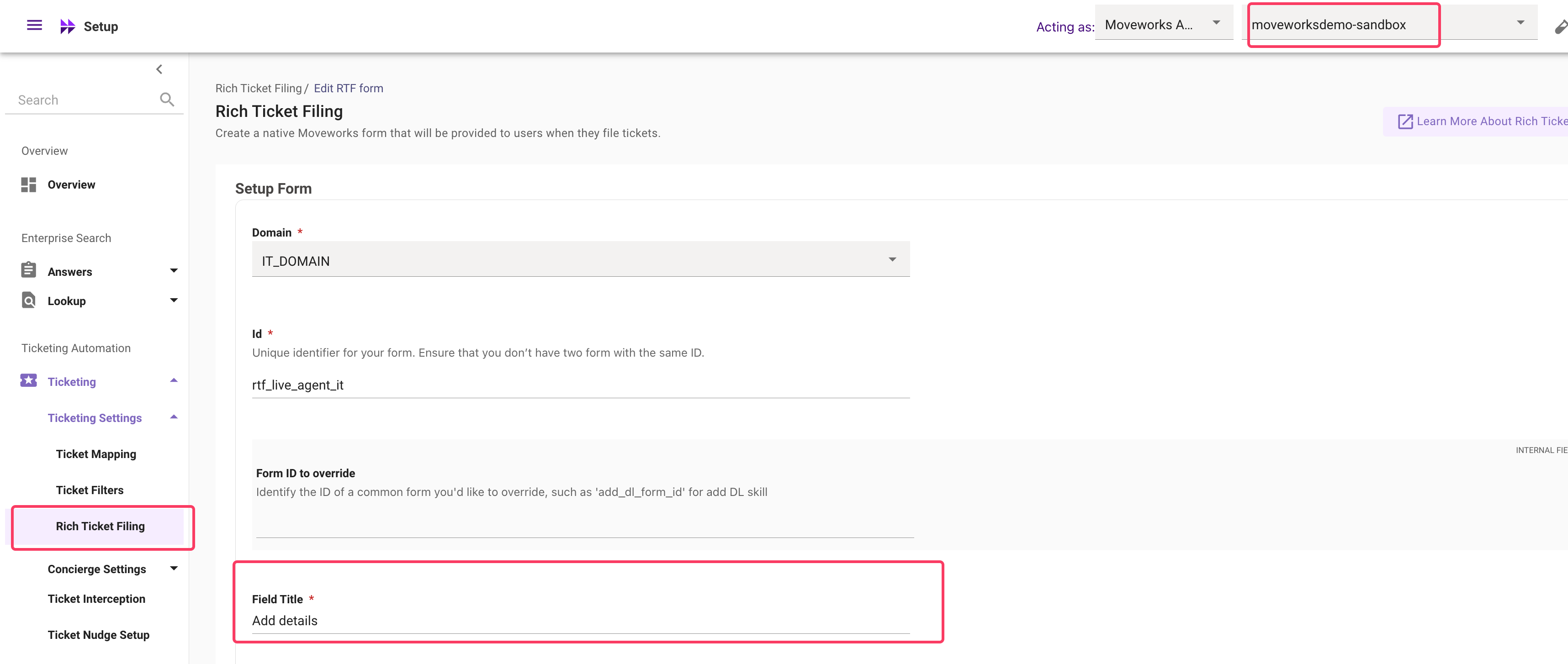

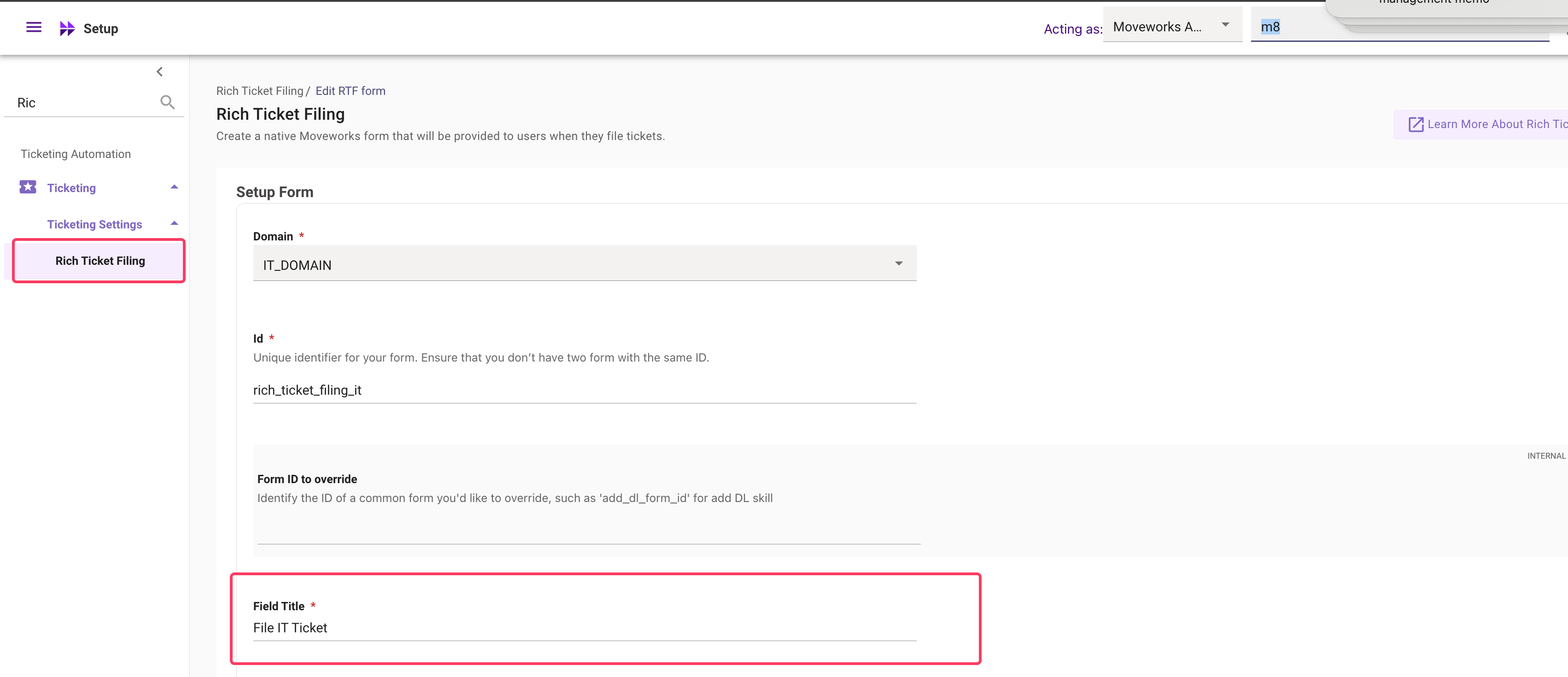

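In the interactions table, filter for type = INTERACTION_TYPE_UIFORM_SUBMISSION and label = handoff or mw_form. The form name (detail.detail) for these requests is Live Agent chat request. However, if you have configured a separate Rich Ticket Filing form, the form name will reflect the value in the field called Field Title.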
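The interactions-table filter above can be sketched in Python as follows, assuming interaction rows are dicts with a nested `detail` dict. The `form_name` parameter defaults to the live agent form; passing "File IT Ticket" (or your Rich Ticket Filing form's Field Title) would count ticket filings the same way. The function name is illustrative.

```python
from typing import Any, Dict, Iterable, List, Sequence


def form_submissions(
    interactions: Iterable[Dict[str, Any]],
    form_name: str = "Live Agent chat request",
    labels: Sequence[str] = ("handoff", "mw_form"),
) -> List[Dict[str, Any]]:
    """UI form submissions with a handoff/mw_form label whose form name
    (detail.detail) equals form_name."""
    return [
        row
        for row in interactions
        if row.get("type") == "INTERACTION_TYPE_UIFORM_SUBMISSION"
        and row.get("label") in labels
        and (row.get("detail") or {}).get("detail") == form_name
    ]
```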

End users can file a ticket related to their issue if they do not find the AI Assistant helpful. As with live agent requests, this can be tracked via both the plugin-calls table and the interactions table.

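To track this via the plugin-calls table:
1. Filter for name = “Create Ticket” plugin.
2. Filter for served = true and used = true.
3. Use the interaction_id attribute to fetch the details from the interactions table.

To track this via the interactions table, filter for type = INTERACTION_TYPE_UIFORM_SUBMISSION and label = handoff or mw_form. The form name (detail.detail) for these submissions is File IT Ticket. However, if you have configured a separate Rich Ticket Filing form, the form name will reflect the value in the field called Field Title.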

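Notifications sent: From the conversations table, take all conversations where route = “Notification”.

Notifications engaged:
1. From the conversations table, get all conversation IDs where route = “Notification”.
2. Filter the interactions table for those conversation IDs where actor = user.

This gives you all notification conversations that the user engaged with.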

Please note: Every notification has a unique conversation ID.

Notifications sent: From the conversations table, take all conversations where route = “Notification”, and use the type field to break these notifications down by type.

Notifications engaged:
1. From the conversations table, get all conversation IDs where route = “Notification”.
2. Filter the interactions table for those conversation IDs where actor = user.

This gives you all notification conversations that the user engaged with.

You can then break down these notifications by using the type field in the conversations table.
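Combining the sent and engaged counts with the type breakdown, a minimal Python sketch, assuming conversation and interaction rows are dicts mirroring the API records (the function name is illustrative):

```python
from collections import Counter
from typing import Any, Dict, Iterable, Tuple


def notifications_by_type(
    conversations: Iterable[Dict[str, Any]], interactions: Iterable[Dict[str, Any]]
) -> Tuple[Counter, Counter]:
    """Return (sent, engaged) notification counts keyed by conversation type."""
    # Every notification is its own conversation with route = "Notification".
    notif_type = {
        c["id"]: c.get("type") for c in conversations if c.get("route") == "Notification"
    }
    # A notification counts as engaged if at least one user interaction references it.
    engaged_ids = {
        i["conversation_id"]
        for i in interactions
        if i.get("actor") == "user" and i.get("conversation_id") in notif_type
    }
    sent = Counter(notif_type.values())
    engaged = Counter(notif_type[cid] for cid in engaged_ids)
    return sent, engaged
```

Totals across all types are `sum(sent.values())` and `sum(engaged.values())`.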

CSAT (Customer Satisfaction) data is exposed via the Data API as a specific notification conversation type, plus the user’s responses captured in the Interactions entity. The sections below cover what is provided in the CSAT data and the most common CSAT calculations.

CSAT data is split across two entities — a conversation row that represents the survey being sent, and a set of interaction rows that represent what the user did with it. There is no separate “CSAT” table; everything you need is reachable from the standard conversations and interactions entities.

Every CSAT survey sent to a user appears as a unique row in the conversations table. Identify CSAT surveys by:

- route = "Notification"
- type = "CONVERSATION_TYPE_CSAT_NOTIFICATION"

Key fields on a CSAT conversation row:

A row in conversations with the filters above represents delivery of a CSAT survey. The user’s response (or non-response) lives in the interactions table.

When a user interacts with a CSAT survey, one or more rows are written to the interactions table with the same conversation_id as the survey. There are two interaction shapes you should know about:

1. Star rating (button click)

The 1–5 star rating the user selects is captured as a button click.

Users can re-rate the same survey. Each click writes a new row, so multiple INTERACTION_TYPE_BUTTON_CLICK rows can share the same conversation_id. To get the final rating per user, take the latest row by created_time per conversation_id.

2. Free-text feedback (form submission)

If the user leaves a written comment alongside their rating, it is captured as a UI form submission.

Both rating and free-text feedback rows can be joined back to the originating CSAT conversation via conversation_id, and to the user via user_id.

A CSAT conversation with no matching actor = "user" rows in the interactions table represents a survey that was delivered but not engaged with. Use this to compute response rate (see below).

Counts every CSAT survey delivered to an end user in the timeframe.

1. Start from the conversations table.
2. Filter for route = "Notification" and type = "CONVERSATION_TYPE_CSAT_NOTIFICATION".
3. Count the distinct id values.

Each CSAT survey is a unique conversation. Sent volume is driven by survey eligibility (active users not surveyed in the last 180 days) and the configured sampling rate.

The percentage of delivered CSAT surveys that the user actually responded to (rated and/or commented).
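A minimal Python sketch of the response rate, assuming conversation and interaction rows are dicts mirroring the API records. It treats any actor = "user" interaction on a CSAT conversation as a response, matching the definition above; the function name is illustrative.

```python
from typing import Any, Dict, Iterable

CSAT_TYPE = "CONVERSATION_TYPE_CSAT_NOTIFICATION"


def csat_response_rate(
    conversations: Iterable[Dict[str, Any]], interactions: Iterable[Dict[str, Any]]
) -> float:
    """Percentage of delivered CSAT surveys with at least one user interaction."""
    sent = {
        c["id"]
        for c in conversations
        if c.get("route") == "Notification" and c.get("type") == CSAT_TYPE
    }
    if not sent:
        return 0.0
    responded = {
        i["conversation_id"]
        for i in interactions
        if i.get("actor") == "user" and i.get("conversation_id") in sent
    }
    return 100.0 * len(responded) / len(sent)
```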

1. Get the CSAT conversation IDs from the conversations table where route = "Notification" and type = "CONVERSATION_TYPE_CSAT_NOTIFICATION".
2. In the interactions table, find rows whose conversation_id is in the set above and actor = "user". Count the distinct conversation_id values.
3. Divide this count by the number of CSAT surveys sent.

The rating distribution breaks down CSAT responses by the 1–5 star rating the user selected.

1. Get the CSAT conversation IDs (route = "Notification" and type = "CONVERSATION_TYPE_CSAT_NOTIFICATION") from the conversations table.
2. In the interactions table, filter for rows whose conversation_id is in that set and type = "INTERACTION_TYPE_BUTTON_CLICK".
3. Read detail.content (the button name corresponding to the star rating).
4. Group by detail.content to get the distribution.

Users can re-rate the same CSAT survey. If you want only the final rating per user, take the latest interaction (by created_time) per conversation_id.
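The distribution, with the optional "final rating only" de-duplication, can be sketched in Python as follows. It assumes interaction rows are dicts with ISO-8601 created_time strings and a nested `detail` dict, and that `csat_ids` already holds the CSAT conversation IDs; the exact detail.content values depend on your survey's button names.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Any, Dict, Iterable, Set


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def rating_distribution(
    interactions: Iterable[Dict[str, Any]], csat_ids: Set[str], final_only: bool = True
) -> Counter:
    """Count star ratings (detail.content of button clicks) on CSAT surveys.

    With final_only=True, only the latest click per conversation_id is kept,
    so a re-rated survey contributes one rating.
    """
    clicks = [
        row
        for row in interactions
        if row.get("type") == "INTERACTION_TYPE_BUTTON_CLICK"
        and row.get("conversation_id") in csat_ids
    ]
    if final_only:
        latest: Dict[str, Dict[str, Any]] = {}
        for row in clicks:
            current = latest.get(row["conversation_id"])
            if current is None or parse_ts(row["created_time"]) > parse_ts(current["created_time"]):
                latest[row["conversation_id"]] = row
        clicks = list(latest.values())
    return Counter((row.get("detail") or {}).get("content") for row in clicks)
```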

Free-text feedback captures the qualitative comments users leave alongside their rating.

1. Filter the interactions table where:
   - type = "INTERACTION_TYPE_UIFORM_SUBMISSION"
   - label = "mw_form"
   - detail.detail = "CSAT Feedback"
2. Read the comment text from detail.content.
3. Join to conversations on conversation_id to attribute the comment to the originating CSAT survey, and confirm type = "CONVERSATION_TYPE_CSAT_NOTIFICATION" if you want to exclude any free-text feedback that wasn’t from a CSAT campaign.

The success of a plugin can be measured by evaluating when it was served and used, and comparing those outcomes against user feedback. You can measure this for a specific plugin or a list of plugins, depending on your requirements.
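A minimal Python sketch of this comparison, following the steps below: keep the calls where the chosen plugins were served and used, then tally the feedback forms whose parent_interaction_id points at those interactions. It assumes plugin-call rows carry JSON booleans for served and used, and that feedback forms record "Helpful" or "Unhelpful" in detail.resource_id; both are assumptions about the exported data.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Set


def plugin_feedback_summary(
    plugin_calls: Iterable[Dict[str, Any]],
    interactions: Iterable[Dict[str, Any]],
    plugin_names: Set[str],
) -> Dict[str, int]:
    """Served-and-used interactions for the given plugins, plus their feedback."""
    # Interactions in which one of the chosen plugins was both served and used.
    successful = {
        call["interaction_id"]
        for call in plugin_calls
        if call.get("name") in plugin_names
        and call.get("served") is True
        and call.get("used") is True
    }
    # Feedback forms attached to those interactions via parent_interaction_id.
    feedback = Counter(
        (row.get("detail") or {}).get("resource_id")
        for row in interactions
        if row.get("type") == "INTERACTION_TYPE_UIFORM_SUBMISSION"
        and row.get("label") == "feedback"
        and row.get("parent_interaction_id") in successful
    )
    return {
        "served_and_used": len(successful),
        "helpful": feedback["Helpful"],
        "unhelpful": feedback["Unhelpful"],
    }
```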

1. Filter the plugin-calls table using the plugin’s name attribute.
2. Filter for served = true and used = true.
3. Get the interaction_id attribute.
4. In the interactions table, filter for type = INTERACTION_TYPE_UIFORM_SUBMISSION and label = feedback.
5. Match parent_interaction_id against the interaction IDs from Step 3.

A knowledge gap is identified when the Knowledge Base plugin is called but is unsuccessful (served = false and used = false).

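1. In the plugin-calls table, filter for name = “Knowledge Base”.
2. Filter for served = false and used = false.
3. Match the interaction_id values against the id column of the interactions table.
4. The user's query is present in the detail.content attribute, and the detected topic is present under the detail.entity attribute.

*The knowledge gap referenced here is prescriptive. You can adapt it to your own definition.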
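A minimal Python sketch of this knowledge-gap lookup. It assumes plugin-call rows carry JSON booleans for served and used, and that interaction rows are dicts with an `id` field and a nested `detail` dict; the function names are illustrative.

```python
from collections import Counter
from typing import Any, Dict, Iterable, List


def knowledge_gaps(
    plugin_calls: Iterable[Dict[str, Any]], interactions: Iterable[Dict[str, Any]]
) -> List[Dict[str, Any]]:
    """Queries and detected topics for unsuccessful Knowledge Base calls."""
    failed = {
        call["interaction_id"]
        for call in plugin_calls
        if call.get("name") == "Knowledge Base"
        and call.get("served") is False
        and call.get("used") is False
    }
    gaps = []
    for row in interactions:
        if row.get("id") in failed:
            detail = row.get("detail") or {}
            gaps.append({
                "interaction_id": row["id"],
                "query": detail.get("content"),  # the user's query
                "topic": detail.get("entity"),   # the detected topic
            })
    return gaps


def gap_topics(gaps: List[Dict[str, Any]]) -> Counter:
    """How often each detected topic appears among the knowledge gaps."""
    return Counter(gap["topic"] for gap in gaps)
```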

To fetch the approvals processed via the AI Assistant, search for the plugin named ‘Update Approval Record’ where served = true and used = true.

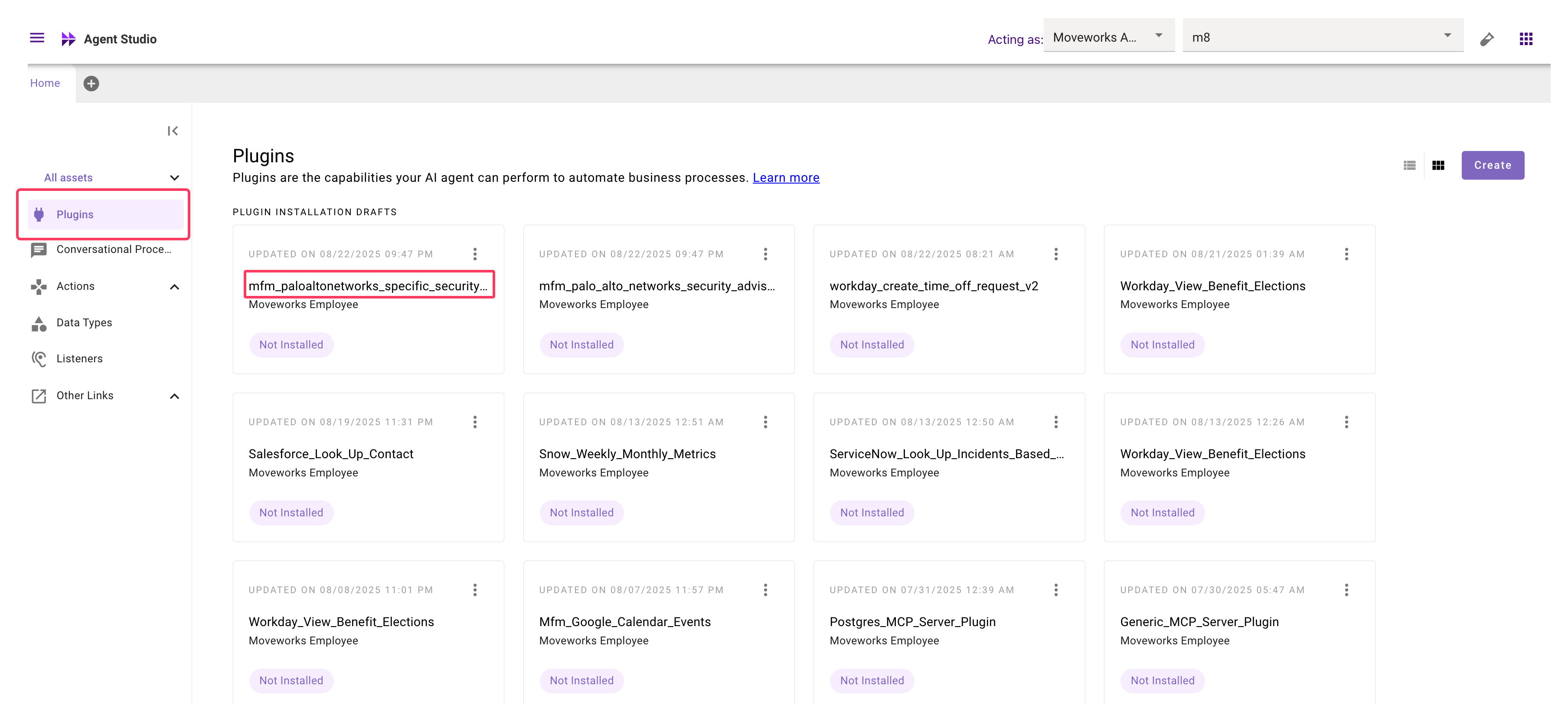

1. In the plugin-calls table, filter for name = “Update Approval Record”.
2. Filter for served = true and used = true.
3. Get the id attribute.
4. Filter on plugin_call_id and enter the IDs fetched in the previous step.

If you built custom plugins or installed plugins from the marketplace, these are included in the Data API. Custom plugins are referenced in the plugin-calls table.

Filter the plugin-calls table by the plugin's name attribute. To find the custom plugin name, open the Agent Studio app and go through the plugins listed there.

These are prescriptive definitions. Please feel free to modify the calculations as per your own requirements.

Definition: Deflections are interactions where no ticket was filed, no live agent connection was requested, and no negative feedback was given by the end user.
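Following the steps below, a minimal Python sketch of the deflection calculation. It assumes interaction rows are dicts with a nested `detail` dict; the form names are parameters because they depend on your configuration, and the function name is illustrative.

```python
from typing import Any, Dict, Iterable, List, Set


def deflected_interactions(
    interactions: Iterable[Dict[str, Any]],
    ticket_form: str = "File IT Ticket",
    live_agent_form: str = "Live Agent chat request",
) -> Set[str]:
    """Free-text interactions with no ticket, live agent request, or negative feedback."""
    rows: List[Dict[str, Any]] = list(interactions)
    free_text_ids = {row["id"] for row in rows if row.get("type") == "INTERACTION_TYPE_FREE_TEXT"}
    excluded = set()
    for row in rows:
        if row.get("type") != "INTERACTION_TYPE_UIFORM_SUBMISSION":
            continue
        detail = row.get("detail") or {}
        escalated = row.get("label") in ("handoff", "mw_form") and detail.get("detail") in (
            ticket_form,
            live_agent_form,
        )
        negative = row.get("label") == "feedback" and detail.get("resource_id") == "Unhelpful"
        if escalated or negative:
            # The form points back to the interaction it was filed against.
            excluded.add(row.get("parent_interaction_id"))
    return free_text_ids - excluded
```

A deflection rate then follows as the size of this set divided by the number of free-text interactions.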

1. Filter the interactions table for type = INTERACTION_TYPE_FREE_TEXT and capture the id values.
2. Identify ticket filings:
   - type = INTERACTION_TYPE_UIFORM_SUBMISSION
   - label = "handoff" or "mw_form"
   - detail.detail = "File IT Ticket" (adjust based on your ticketing form name)
3. Identify live agent requests:
   - type = INTERACTION_TYPE_UIFORM_SUBMISSION
   - label = "handoff" or "mw_form"
   - detail.detail = "Live Agent chat request" (adjust based on your form name)
4. Identify negative feedback:
   - type = INTERACTION_TYPE_UIFORM_SUBMISSION
   - label = "feedback"
   - detail.resource_id = "Unhelpful"
5. Remove from the step 1 IDs any ID that matches a parent_interaction_id from steps 2, 3, and 4. The remaining interactions are deflections.

Definition: Time savings are calculated based on the hours saved when specific requests are automated by the AI Assistant.
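Using the "Grant Software Access" example in the steps below, a minimal Python sketch. The `hours_per_request` value is a hypothetical benchmark you supply (for example, the average manual handling time); it is not provided by the Data API. Plugin-call rows are assumed to carry JSON booleans for served and used.

```python
from typing import Any, Dict, Iterable


def hours_saved(
    plugin_calls: Iterable[Dict[str, Any]], plugin_name: str, hours_per_request: float
) -> float:
    """Distinct automated interactions for the plugin times the assumed hours saved each."""
    automated = {
        call["interaction_id"]
        for call in plugin_calls
        if call.get("name") == plugin_name
        and call.get("served") is True
        and call.get("used") is True
    }
    return len(automated) * hours_per_request
```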

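1. In the plugin-calls table, filter for name = "Grant Software Access".
2. Filter for served = true and used = true.
3. Count the distinct interaction_id values that meet the above criteria.
4. Multiply this count by the hours saved per automated request.

Definition: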

Mean Time to Resolution (MTTR) is a key metric typically defined by the formula: MTTR = (sum of all resolution times) / (total number of resolutions)

In the world of AI assistants, conversations are often multi-turn, involving several back-and-forth interactions between a user and the bot. Here’s how you can calculate MTTR in this context.

1. Get the interaction_id and created_time for all interactions where the actor is ‘user’ and the type is ‘INTERACTION_TYPE_FREE_TEXT’.
2. Find the interactions where the actor is ‘bot’ and the parent_interaction_id matches the user’s interaction_id from the previous step. Get the created_time for these bot interactions.
3. For each pair, subtract the user’s created_time from the bot’s created_time to get the resolution time.
4. Average these resolution times to get MTTR.

Optional: Excluding Escalations

The method above counts every bot response as a resolution. To get a more precise metric, you might want to exclude cases that were escalated to a human.

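1. Find interactions with a type of ‘INTERACTION_TYPE_UIFORM_SUBMISSION’ where detail.detail contains “Live agent chat request” or “File IT ticket”.
2. Exclude the user interactions whose interaction_id matches the parent_interaction_id of these escalations, then calculate MTTR as above.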
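A minimal Python sketch of the MTTR calculation, including the optional escalation exclusion. It assumes interaction rows are dicts whose interaction ID is stored in an `id` field, with ISO-8601 created_time strings and a nested `detail` dict. As described above, every bot reply to a user message counts as one resolution. The escalation check is a case-insensitive "contains" match, because the form names are capitalized differently in different places; that is a design choice, not documented behavior.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Iterable, List, Optional

ESCALATION_FORMS = ("live agent chat request", "file it ticket")


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return dt if dt.tzinfo else dt.replace(tzinfo=timezone.utc)


def mttr_seconds(
    interactions: Iterable[Dict[str, Any]], exclude_escalations: bool = False
) -> Optional[float]:
    """Mean seconds between a user free-text message and each bot reply to it."""
    rows: List[Dict[str, Any]] = list(interactions)
    # User free-text messages, keyed by interaction ID.
    asked = {
        row["id"]: parse_ts(row["created_time"])
        for row in rows
        if row.get("actor") == "user" and row.get("type") == "INTERACTION_TYPE_FREE_TEXT"
    }
    if exclude_escalations:
        # Drop user messages that an escalation form points back to.
        escalated = {
            row.get("parent_interaction_id")
            for row in rows
            if row.get("type") == "INTERACTION_TYPE_UIFORM_SUBMISSION"
            and any(
                form in ((row.get("detail") or {}).get("detail") or "").lower()
                for form in ESCALATION_FORMS
            )
        }
        asked = {iid: ts for iid, ts in asked.items() if iid not in escalated}
    # Each bot reply to a remaining user message counts as one resolution.
    durations = [
        (parse_ts(row["created_time"]) - asked[row["parent_interaction_id"]]).total_seconds()
        for row in rows
        if row.get("actor") == "bot" and row.get("parent_interaction_id") in asked
    ]
    return sum(durations) / len(durations) if durations else None
```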