The Moveworks AI Assistant is a general-purpose employee service tool, and it is instructed not to engage on sensitive topics. In these scenarios, the expected behavior is for the bot to decline the request. All incoming requests are analyzed with machine learning for potentially toxic or non-work-appropriate content. Moveworks supplements GPT’s own toxicity check with a large language model that assesses appropriateness for work environments, and applies a policy that guides the Moveworks AI Assistant not to engage with the user when such a request is detected. When the toxicity filter is triggered, the user receives a message similar to “I’m unable to assist with that request” and does not receive an acknowledgement of the issue.

Examples: Language that is hateful, abusive, derogatory or offensive.

In some cases, the default Moveworks toxicity filter can be too broad. For example, an organization may want the AI Assistant to engage with users seeking mental health support, where surfacing relevant knowledge (such as Employee Assistance Program resources) is more beneficial than declining the request.

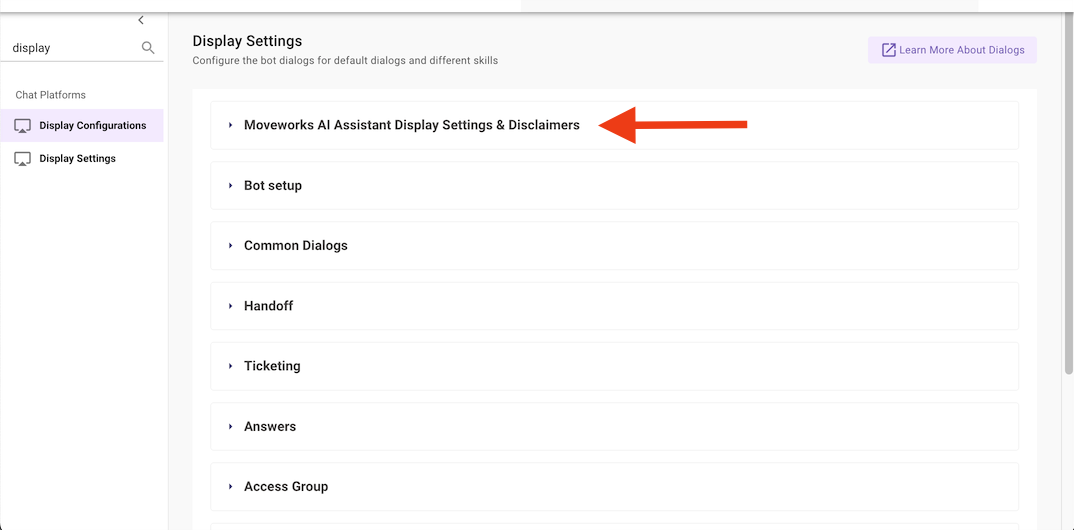

To handle these cases, Moveworks organizes its safety filter into a fixed set of content categories. By default, all categories are blocked. Admins can choose to allow specific categories so that the AI Assistant will engage with requests falling under those categories and surface the relevant knowledge.

The available content categories are:

Any category that is not explicitly selected remains blocked. The configured selections are applied to every future interaction with the Moveworks AI Assistant.
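The default-deny behavior described above can be pictured as an allowlist check. The following is only a minimal sketch under stated assumptions, not the Moveworks implementation: the category names, `make_allowlist`, and `should_engage` are all hypothetical and exist purely for illustration.

```python
# Hypothetical category names for illustration only; the real fixed set
# is defined and maintained by Moveworks.
FIXED_CATEGORIES = frozenset({"mental_health", "category_b", "category_c"})


def make_allowlist(selected=()):
    """Build the set of allowed categories (hypothetical helper).

    With no selection, the allowlist is empty, so every category is blocked.
    Only categories from the fixed set can be selected.
    """
    selected = frozenset(selected)
    unknown = selected - FIXED_CATEGORIES
    if unknown:
        raise ValueError(f"Not a provided category: {sorted(unknown)}")
    return selected


def should_engage(detected, allowlist):
    """Return True if the assistant should engage with the request.

    `detected` is the set of categories flagged on a request; an empty set
    means nothing was flagged. Any flagged category that is not explicitly
    allowed causes the request to be declined.
    """
    return all(category in allowlist for category in detected)


default_config = make_allowlist()                    # nothing selected
custom_config = make_allowlist({"mental_health"})    # one category allowed

print(should_engage({"mental_health"}, default_config))
print(should_engage({"mental_health"}, custom_config))
print(should_engage({"mental_health", "category_b"}, custom_config))
```

In this sketch, a request is declined as soon as it touches any category that was not selected, which mirrors the rule that unselected categories remain blocked.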

Note: By default, every organization starts with all content categories blocked. Allowing a category applies to all users interacting with the Moveworks AI Assistant, so select categories carefully based on your organization’s policies.

Before configuring allowed content categories, please gather the following:

Any category you do not select will remain blocked. Only select categories that your organization has reviewed and explicitly wants the AI Assistant to engage on.

A: This is a Moveworks-specific toxicity check only. The OpenAI or Azure toxicity check is also applied, and Moveworks does not have control over it.

A: All content categories remain blocked. This is the default behavior for every organization and provides the strongest safety posture.

A: No. The set of content categories is fixed and maintained by Moveworks. You can only choose which of the provided categories to allow.